Amazon OpenAI Alliance

The updates regarding Gemini 3.1 Pro and the OpenAI-Amazon deal represent two of the biggest shifts in the industry: one focusing on near-human reasoning levels and the other on the massive infrastructure required to power the next generation of agents.

Gemini 3.1 Pro: The Reasoning Powerhouse

Released on February 19, 2026, Gemini 3.1 Pro is Google’s attempt to bridge the gap between “fast chat” and “deep thinking.”

- ARC-AGI-2 Breakthrough: It achieved a verified score of 77.1% on the ARC-AGI-2 benchmark—more than double the performance of Gemini 3 Pro. This test measures a model’s ability to solve entirely new logic puzzles it hasn’t seen in training.

- The “Thinking” Gear System: The model introduces a tiered thinking system. You can now choose between:

  - Fast: For quick, everyday tasks.

  - Thinking: For moderate complexity.

  - Pro: For the deepest reasoning and complex scientific/coding tasks.

- Code-Based Visuals: A major new feature is Native SVG and Code Rendering. It doesn’t just describe visuals; it can generate and animate website-ready SVGs or 3D code structures (like an interactive dashboard or a simulation) directly in the interface.

- Expanded Capacity: While it keeps the 1M-token input limit, the output limit has been raised to 64,000 tokens, addressing the “truncation” issue where long code files were cut off midway.
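The tier choice and the output ceiling described above can be pictured with a small sketch. Everything in it is hypothetical: the `ThinkingTier` enum, the `pick_tier` heuristic, and the 1-10 complexity score are illustrative names and thresholds, not part of any Google API. Only the three tier names and the 64,000-token output limit come from the text.

```python
from enum import Enum

# Output ceiling quoted in the article; the constant name is our own.
MAX_OUTPUT_TOKENS = 64_000


class ThinkingTier(Enum):
    """Hypothetical labels mirroring the three tiers named in the article."""
    FAST = "fast"
    THINKING = "thinking"
    PRO = "pro"


def pick_tier(complexity: int) -> ThinkingTier:
    """Map a made-up 1-10 complexity score to a tier (thresholds are assumptions)."""
    if not 1 <= complexity <= 10:
        raise ValueError("complexity must be between 1 and 10")
    if complexity <= 3:
        return ThinkingTier.FAST
    if complexity <= 7:
        return ThinkingTier.THINKING
    return ThinkingTier.PRO


def fits_output_limit(expected_tokens: int) -> bool:
    """Return True when a planned response stays within the quoted output limit."""
    return 0 <= expected_tokens <= MAX_OUTPUT_TOKENS
```

In this sketch, a quick lookup scored at 2 would land on `ThinkingTier.FAST`, while a hard scientific task scored at 9 would land on `ThinkingTier.PRO`. How the real product exposes the choice is not specified in the text.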

The Amazon-OpenAI Strategic Alliance

Announced on February 27, 2026, this $110 billion “mega-round” deal fundamentally changes OpenAI’s relationship with cloud providers.

- The Investment: Amazon is leading with a $50 billion commitment (starting with $15bn upfront). Other participants include Nvidia ($30bn) and SoftBank ($30bn), valuing OpenAI at a staggering $840 billion (pre-money $730bn).

- 2 Gigawatts of Trainium: OpenAI has committed to consuming 2 gigawatts of compute capacity powered by Amazon’s custom Trainium chips (specifically Trainium3 and the upcoming Trainium4). This reduces reliance on Nvidia’s H-series GPUs, which are more expensive and harder to source.

- Exclusive Distribution: AWS will now be the exclusive third-party cloud provider for OpenAI Frontier, a new platform designed to help enterprises build and manage teams of autonomous AI agents.

- Stateful Runtime Environment: Amazon and OpenAI are co-developing a system that allows AI agents to have a “memory” of prior work and identity, allowing them to work across software tools and data sources like a human employee would.

Note on Microsoft: Despite this massive pivot toward Amazon (AWS), OpenAI and Microsoft released a joint statement confirming their relationship remains “strong and central,” though it’s clear OpenAI is aggressively diversifying its infrastructure.

The Amazon-OpenAI Strategic Alliance, announced on February 27, 2026, is one of the largest and most consequential deals in the history of the technology industry.

It represents a $110 billion investment in OpenAI, valuing the company at $840 billion, and fundamentally reshapes the competitive landscape for AI infrastructure and enterprise distribution.

Here is a detailed breakdown of the alliance’s components, implications, and how it affects the existing Microsoft partnership.

1. The Financial Investment: A Historic “Mega-Round”

OpenAI raised $110 billion in new capital, a record-breaking figure for a private technology company.

- Amazon’s Commitment: Amazon led the round with a massive $50 billion investment.

- Structure: The investment starts with an initial $15 billion check, followed by another $35 billion over the coming months as certain conditions are met.

- Other Major Participants:

  - Nvidia: Invested $30 billion.

  - SoftBank Group: Invested $30 billion.

- Valuation: The round valued OpenAI at $730 billion pre-money. Adding the $110 billion of new capital gives a post-money valuation of $840 billion.
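The figures above are internally consistent, which a few lines of arithmetic can confirm. This is a minimal sketch using only the numbers quoted in the text. The variable names are our own, and the implied ownership share is a simple derived ratio that ignores share classes or other deal terms not described here.

```python
# All figures are in billions of US dollars, as quoted in the article.
commitments = {"Amazon": 50, "Nvidia": 30, "SoftBank": 30}
amazon_tranches = {"upfront": 15, "conditional": 35}
pre_money = 730

# The round size is the sum of the three commitments.
round_size = sum(commitments.values())

# Post-money valuation is pre-money valuation plus the new capital.
post_money = pre_money + round_size

# Derived ratio: fraction of the post-money value represented by this round.
new_capital_share = round_size / post_money

if __name__ == "__main__":
    print(f"round size: ${round_size}bn")
    print(f"post-money: ${post_money}bn")
    print(f"Amazon tranches total: ${sum(amazon_tranches.values())}bn")
    print(f"new capital as share of post-money: {new_capital_share:.1%}")
```

The three commitments sum to $110 billion, the two Amazon tranches sum to its $50 billion, and $730 billion plus $110 billion gives the stated $840 billion.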

2. Infrastructure: The Shift to Trainium and AWS

The core of the technical alliance is OpenAI’s aggressive diversification of its computing infrastructure. While Microsoft Azure remains a central partner, OpenAI is shifting a massive portion of its workloads to Amazon Web Services (AWS).

The Trainium Deal

OpenAI has committed to consuming 2 gigawatts (GW) of computing capacity powered by Amazon’s custom AI chips, Trainium.

- Why this matters: This reduces OpenAI’s near-exclusive reliance on Nvidia’s H-series GPUs, which are expensive and have suffered from chronic supply shortages.

- Chip Generations: The commitment spans current Trainium3 chips and next-generation Trainium4 chips (expected in 2027), which are designed for extreme scale model training and inference.

- Cost Efficiency: By using Amazon’s vertically integrated silicon, OpenAI aims to significantly lower the long-term cost of producing intelligence at scale.

Expanded Cloud Agreement

OpenAI and AWS expanded their existing cloud services agreement by an additional $100 billion over the next 8 years.

3. Technology & Products: Co-Developing the “Agentic OS”

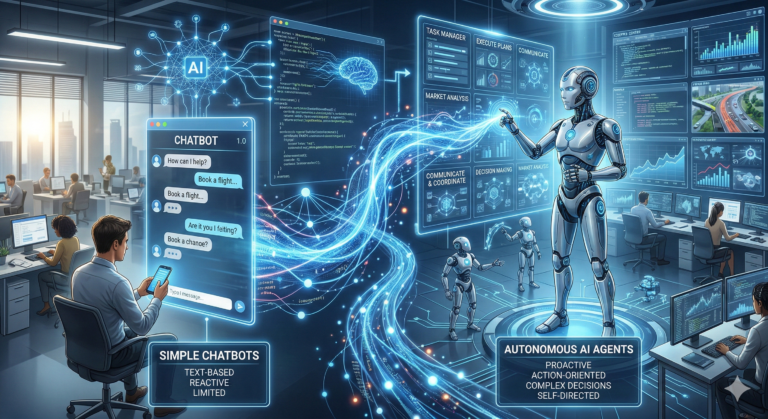

The alliance is co-creating the necessary software stack to move AI from single chats to multi-step, autonomous agentic workflows.

A. Stateful Runtime Environment (SRE)

A breakthrough announcement is the joint development of a Stateful Runtime Environment, which will run natively within Amazon Bedrock.

- The Concept: Traditional AI models are “stateless”—they forget everything once a chat ends. SRE gives agents a persistent “memory” and “identity.”

- Capabilities: SRE enables AI systems to retain context from prior tasks, remember previous work, collaborate across software tools and data sources, and manage ongoing workflows and projects that last for days or weeks.
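The stateless versus stateful distinction can be illustrated with a toy example. This is only a sketch of the general idea, assuming a local JSON file as the persistence layer. The `StatefulAgent` class, its methods, and the file format are hypothetical and say nothing about how the actual Stateful Runtime Environment or Amazon Bedrock works.

```python
import json
import tempfile
from pathlib import Path


def stateless_reply(message: str) -> str:
    """Each call sees only its own input; nothing carries over between calls."""
    return f"handled: {message}"


class StatefulAgent:
    """Toy agent that persists its identity and task history to a JSON file."""

    def __init__(self, agent_id: str, store: Path) -> None:
        self.agent_id = agent_id
        self.store = store
        # Reload earlier work if a previous session left a state file behind.
        if store.exists():
            self.history = json.loads(store.read_text())["history"]
        else:
            self.history = []

    def handle(self, task: str) -> str:
        """Record the task, save state to disk, and report progress so far."""
        self.history.append(task)
        state = {"agent_id": self.agent_id, "history": self.history}
        self.store.write_text(json.dumps(state))
        return f"{self.agent_id} has completed {len(self.history)} task(s)"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "agent_state.json"
        StatefulAgent("agent-1", path).handle("draft report")
        # A fresh object, standing in for a later session, resumes from the file.
        resumed = StatefulAgent("agent-1", path)
        print(resumed.handle("revise report"))
```

The second `StatefulAgent` object knows about the first task only because the state was written to disk, whereas `stateless_reply` has no way to recall earlier calls.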

B. OpenAI Frontier Platform

AWS will now serve as the exclusive third-party cloud distribution provider for OpenAI Frontier.

- What it is: Frontier is OpenAI’s advanced enterprise platform designed to help organizations build, deploy, and manage entire teams of autonomous AI agents.

- Enterprise Focus: Frontier offers shared context, built-in governance, and enterprise-grade security, making it easier for large companies to move from experimentation to production AI.

C. Customized Models for Amazon

OpenAI and Amazon will collaborate to develop customized models tailored for Amazon’s own developers. These models will power Amazon’s vast ecosystem of customer-facing applications, including e-commerce, Alexa, and logistics.

4. The Microsoft Relationship: Partner or Competitor?

The alliance creates an intricate and potentially tense relationship between OpenAI, Amazon, and Microsoft.

Joint Statement of “Strength”

Microsoft and OpenAI immediately released a joint statement reassuring the market that their relationship remains “strong and central.”

The New Structure

Under the new agreements:

- Microsoft Azure: Remains the exclusive cloud provider for OpenAI’s stateless public APIs and first-party products like ChatGPT.

- AWS: Is the exclusive third-party cloud provider for the OpenAI Frontier enterprise agent platform and the Stateful Runtime Environment.
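The split described above amounts to a lookup from product to cloud provider. The sketch below encodes it as a plain dictionary. The keys and the `provider_for` helper are illustrative names of our own, and the table reflects only the division stated in this article, not any published contract terms.

```python
# Product-to-provider split as described in the article; key names are our own.
EXCLUSIVE_PROVIDER = {
    "stateless_public_api": "Microsoft Azure",
    "chatgpt": "Microsoft Azure",
    "frontier": "AWS",
    "stateful_runtime_environment": "AWS",
}


def provider_for(product: str) -> str:
    """Return the provider recorded for a product, normalizing case and spaces."""
    key = product.strip().lower().replace(" ", "_")
    if key not in EXCLUSIVE_PROVIDER:
        raise KeyError(f"no provider recorded for {product!r}")
    return EXCLUSIVE_PROVIDER[key]


if __name__ == "__main__":
    for name in ("ChatGPT", "Frontier", "Stateful Runtime Environment"):
        print(f"{name}: {provider_for(name)}")
```

Note that the AWS entries are exclusive only among third-party clouds, per the text.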

Analysis of the Shift

While Microsoft retains its exclusive license to OpenAI’s intellectual property (IP) and receives a share of revenue from all partnerships, OpenAI has effectively broken Microsoft’s monopoly on its infrastructure. This diversification grants OpenAI more bargaining power and security in accessing massive compute capacity.