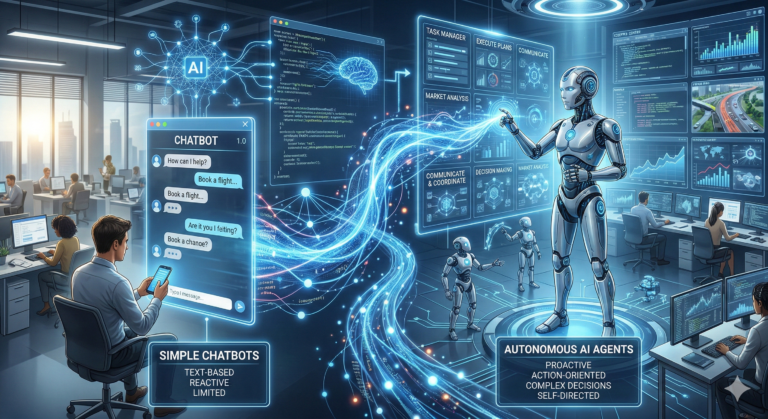

2025 was a massive year for Gemini, marked by a rapid evolution from a powerful chatbot to a sophisticated “agentic” system. The year saw three major model generations (2.0, 2.5, and 3.0) and much deeper integration of Gemini across the Google ecosystem.

Timeline of Major Model Releases

- Gemini 2.0 (January/February): Kicked off the year with Gemini 2.0 Flash as the new default, prioritizing speed and multimodal reasoning. Gemini 2.0 Pro followed, setting new benchmarks for coding and complex logic.

- Gemini 2.5 (March/May): Introduced the “Deep Think” reasoning mode, allowing the model to show its thought process. It also brought 1M+ token context windows to the mainstream, enabling users to analyze massive documents and codebases.

- Gemini 3.0 (November/December): The “Intelligence for a New Era” update. Gemini 3 became the most powerful model family to date, featuring advanced agentic capabilities where the AI can plan, execute, and verify multi-step tasks autonomously.

Key Feature & Tool Highlights

- Agentic Intelligence: Tools like Google Antigravity launched, allowing developers to build AI agents that don’t just chat but actually do work, such as browsing the web to book a trip or managing complex workflows.

- Multimedia Revolution:

  - Veo 3: A state-of-the-art video generation model capable of high-fidelity clips with native audio.

  - Nano Banana Pro: A high-end image generation and editing model built on Gemini 3, focusing on studio-quality realism and precise text rendering.

  - Lyria 2: Advanced text-to-music generation.

- Search & Maps Integration: AI Mode in Search became the standard, allowing for deep, conversational follow-ups. Gemini Live also integrated into Google Maps, enabling a hands-free, conversational driving experience.

- Personal Intelligence: A major privacy-focused feature allowed Gemini to securely connect to your Gmail, Photos, and YouTube data to answer personal questions like, “When does my flight land and which hotel did I book?”

Ecosystem & Industry Impact

- Apple Partnership: One of the biggest headlines of the year was Apple officially partnering with Google to use Gemini as a foundation for next-generation Siri features.

- Workspace for Everyone: Gemini features (Help Me Write, Summarize, etc.) became natively integrated and free for most Google Workspace users.

- Gemini Deep Research: A specialized agent launched in late 2025 that can autonomously conduct hours of academic or professional research and synthesize it into a formatted report.

2025 was a watershed year for Google’s Gemini, defined by its evolution from a powerful conversational AI into an “agentic” system capable of planning and executing complex tasks on your behalf. The year saw a rapid succession of model upgrades, deep integration across Google’s ecosystem, and major industry partnerships.

Here are the key highlights for Gemini in 2025:

The Rise of Agentic Models: From 2.0 to 3.0

The year was characterized by an aggressive release schedule that pushed the boundaries of AI reasoning and speed.

- Gemini 2.0 & 2.5 (Early-Mid 2025): The year began with Gemini 2.0 Flash becoming the new default model, prioritizing speed and efficiency. Gemini 2.5 followed, introducing a “thinking model” capable of more complex reasoning and a massive 1 million token context window, allowing for the analysis of huge documents and codebases.

- Gemini 3 Series (Late 2025): The year culminated with the launch of the Gemini 3 family, the most powerful to date. This included Gemini 3 Flash for lightning-fast everyday tasks, Gemini 3 Pro for complex challenges, and a specialized Gemini 3 Deep Think mode for advanced problem-solving in science, math, and coding.

Breakthrough Features & Tools

Beyond raw model power, 2025 introduced tools that changed how people interact with AI:

- Deep Research Agent: A new capability that allows Gemini to autonomously plan and execute multi-step research tasks, synthesizing information from various sources into a comprehensive report.

- Veo 3 & Imagen 4: Significant leaps in creative AI. Veo 3 introduced state-of-the-art video generation with native audio support, while Imagen 4 delivered even higher-fidelity image generation and editing.

- Personal Intelligence: A major privacy-focused update enabled users to securely connect Gemini to their personal Google apps like Gmail, Photos, and Calendar. This allowed for highly personalized queries like, “Find the flight confirmation email from last week and add it to my calendar.”

- Google Antigravity: A new platform for AI-assisted software development that moves beyond simple code completion toward more collaborative and autonomous coding agents.

Integration Everywhere & Industry Shifts

Gemini became the unified intelligence layer across Google’s products and beyond.

- AI-Powered Google Ecosystem: Gemini was deeply integrated into Google Search with a new “AI Mode,” became the default assistant on Android, and was embedded directly into Chrome for on-page assistance. Its capabilities in Workspace apps like Docs and Sheets were also significantly expanded.

- Apple Partnership: In a landmark deal, Apple announced it would use Gemini as the foundational model to power the next generation of Siri, bringing Google’s AI capabilities to billions of Apple devices.